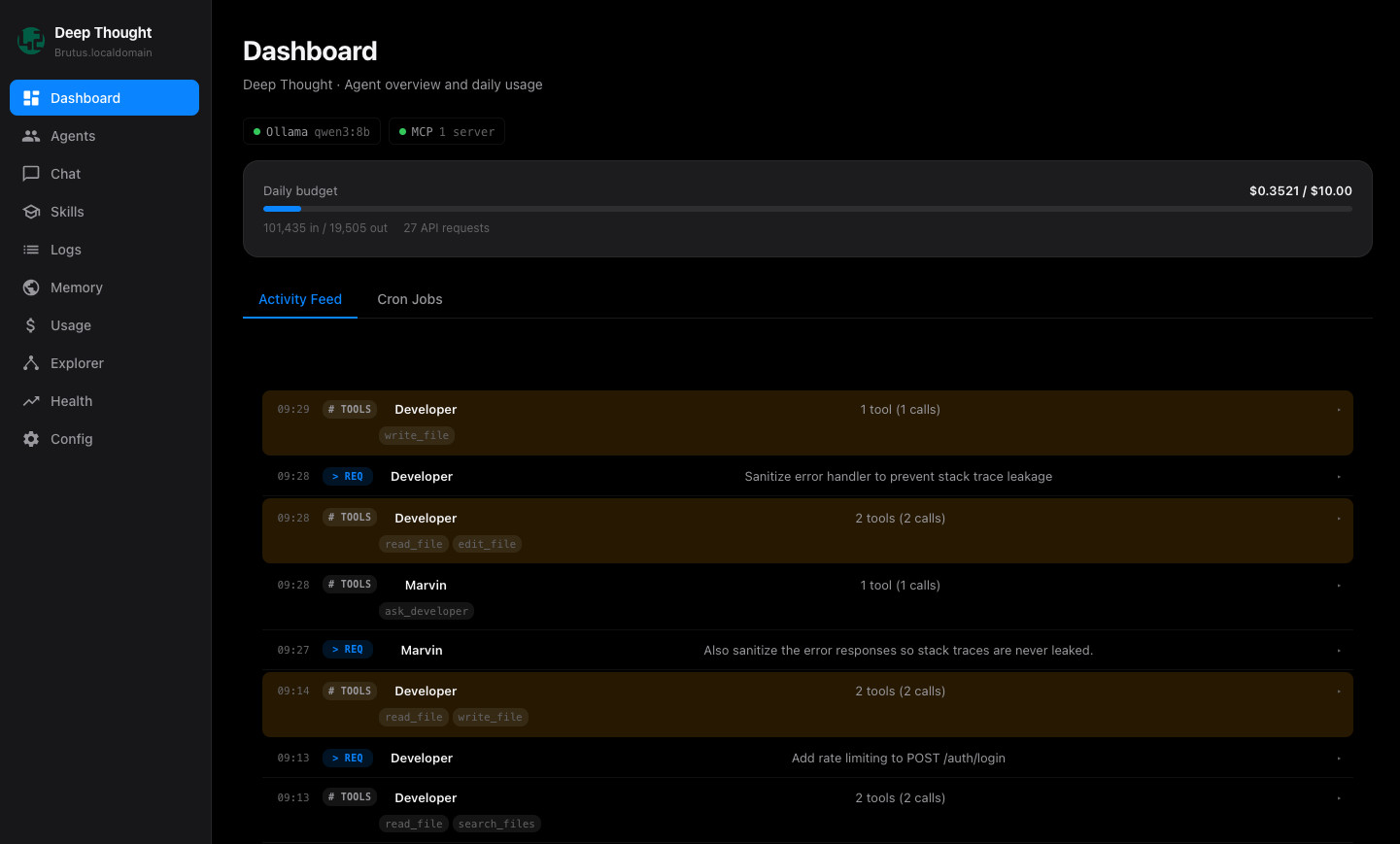

It started with a simple idea: what if I had an AI assistant that understood our business? Two days later, I was staring at a system that manages our time tracking, calculates revenue across currencies, monitors infrastructure, writes code, reviews security, and delegates work between specialized AI agents, all while enforcing a daily budget.

The name? Deep Thought. Because when you build something that tries to answer everything, the reference writes itself.

The Architecture: One Brain, Many Specialists

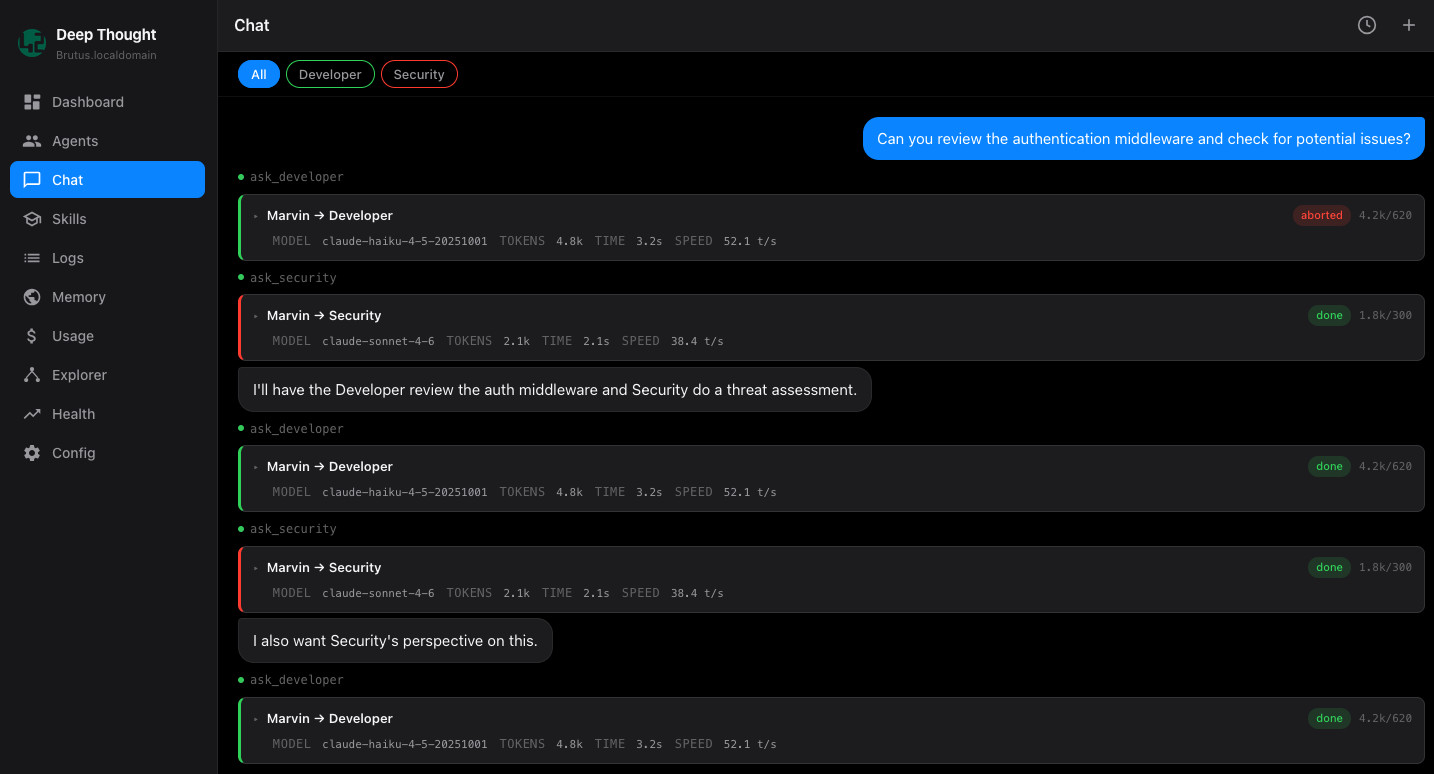

Deep Thought follows a coordinator pattern. There is one lead agent, Marvin, who receives all input and decides what to do with it. General questions, he answers directly. Anything requiring specialized tools or API access, he delegates.

Each specialist agent is self-contained, with its own tools, logic, and personality. Drop a new agent folder into the system and it is discovered automatically: no registration, no config changes. That design decision turned out to be one of the most valuable. I can prototype a new agent in 20 minutes and have it running the same day.
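To make the folder-drop idea concrete, here is a minimal sketch of how such discovery could work. The layout is an assumption for illustration, not Deep Thought's actual structure: each agent lives in its own subfolder with an `agent.py` that exposes a module-level `AGENT` object. The function name `discover_agents` is hypothetical too.

```python
import importlib.util
from pathlib import Path


def discover_agents(agents_dir: Path) -> dict:
    """Load every subfolder containing an agent.py that exposes AGENT.

    Assumed layout (hypothetical): agents/<name>/agent.py
    """
    agents = {}
    for folder in sorted(p for p in agents_dir.iterdir() if p.is_dir()):
        entry = folder / "agent.py"
        if not entry.exists():
            continue  # not an agent folder, skip it
        # Import the file as its own module without touching sys.path.
        spec = importlib.util.spec_from_file_location(f"agents.{folder.name}", entry)
        module = importlib.util.module_from_spec(spec)
        spec.loader.exec_module(module)
        agent = getattr(module, "AGENT", None)
        if agent is not None:
            agents[folder.name] = agent
    return agents
```

Because the folder name doubles as the agent's key, adding an agent is just adding a folder; nothing else has to be edited.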

Different Models for Different Tasks

Not every task needs the same brain, or the same budget.

The Developer agent runs on GPT-4.1 Mini via Microsoft Foundry, keeping all code and data within our own tenant. Security analysis runs on Claude Sonnet, which handles deeper reasoning more effectively. DevOps status checks run on a self-hosted open-source model, because a check that runs 20 times a day costs nothing on your own hardware, and a non-trivial amount if you are calling a premium API every time.

We run a deliberate mix of providers depending on the task and the data involved.

Claude via the Anthropic API handles most reasoning-heavy work: code review, security audits, anything requiring nuance. Azure OpenAI covers operations involving internal company data, where we need the compliance guarantees that come with a proper cloud agreement. For sensitive data that should not leave the building, we use local models: either on dedicated hardware or through an EXO cluster our colleagues have assembled from spare machines. It is scrappy, it is fast enough, and it costs nothing per inference.

Matching the model to the task is what makes the system both trustworthy and affordable to run day to day. The cost difference compounds quickly.
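A routing table is one simple way to express this kind of matching. The sketch below is illustrative only: the task names, model identifiers, the default route, and the rule that sensitive work always falls back to a local model are my assumptions, not the system's real configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelChoice:
    provider: str
    model: str
    leaves_premises: bool  # True if prompts are sent to an external API


# Hypothetical routes; identifiers are placeholders, not real deployment names.
LOCAL = ModelChoice("local", "self-hosted-open-model", leaves_premises=False)
ROUTES = {
    "security_review": ModelChoice("anthropic", "claude-sonnet", True),
    "developer": ModelChoice("microsoft-foundry", "gpt-4.1-mini", True),
    "devops_status": LOCAL,
}
DEFAULT = ROUTES["developer"]  # assumed default for unknown tasks


def pick_model(task: str, sensitive: bool = False) -> ModelChoice:
    """Return the model for a task; sensitive data never leaves the building."""
    choice = ROUTES.get(task, DEFAULT)
    if sensitive and choice.leaves_premises:
        return LOCAL
    return choice
```

Keeping the sensitivity check inside `pick_model` means no individual agent can forget to apply it.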

The Three-Tier Security Model

Every tool in the system carries a security classification:

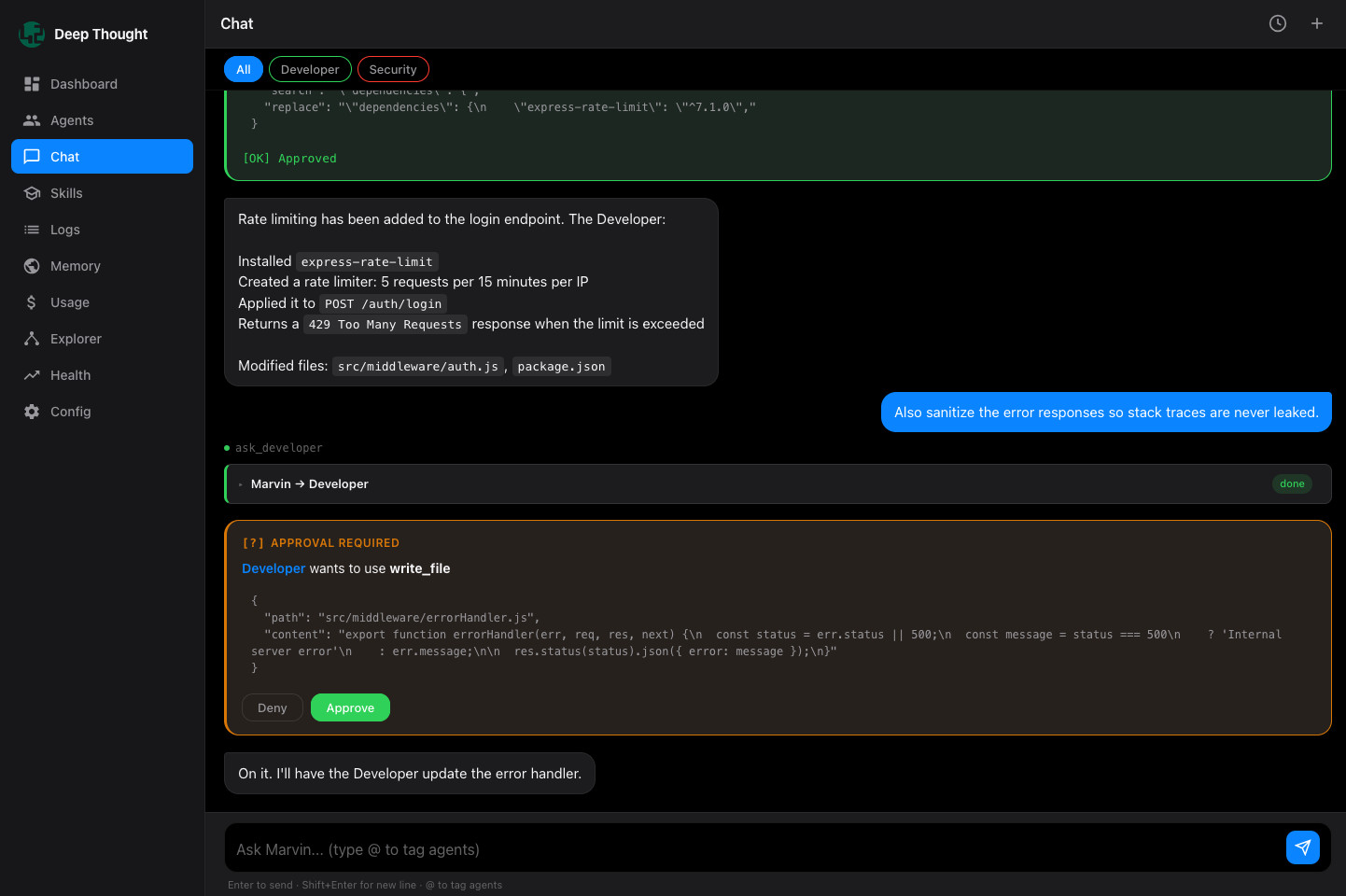

GREEN: Auto-approved. Read operations, lookups, queries.

YELLOW: Requires explicit approval. File writes, shell commands, deployments. A prompt appears before anything happens.

RED: Blocked. No agent can delete a deployment or perform destructive operations without a manual override.

This is the part I am most glad I designed early. It means agents can have real capability while a human stays in the loop for anything that matters. Start with the security model. Everything else follows.
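The three tiers map naturally onto a small gate in front of every tool call. This is a sketch under assumptions: `run_tool`, the `approve` callback, and the `override` flag are hypothetical names, and the real approval prompt is certainly richer than a boolean.

```python
from enum import Enum
from typing import Callable, TypeVar

T = TypeVar("T")


class Tier(Enum):
    GREEN = "green"    # auto-approved: reads, lookups, queries
    YELLOW = "yellow"  # needs explicit human approval
    RED = "red"        # blocked unless manually overridden


def run_tool(
    name: str,
    tier: Tier,
    action: Callable[[], T],
    approve: Callable[[str], bool],
    override: bool = False,
) -> T:
    """Run a tool only if its security tier allows it."""
    if tier is Tier.RED and not override:
        raise PermissionError(f"{name} is RED and requires a manual override")
    if tier is Tier.YELLOW and not approve(name):
        raise PermissionError(f"{name} was not approved")
    return action()
```

The classification travels with the tool, not the agent, so a new agent inherits the same guardrails the moment it uses an existing tool.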

The Finance Agent: Where It Got Real

This is where AI assistance moved from interesting demo to something that genuinely changes how we operate.

The problem: 40+ people logging hours across dozens of projects in our Norwegian accounting system. Different billing models, different rates, and three currencies: NOK, EUR, and GBP. Management needs weekly revenue reports. Previously that meant exporting a CSV, opening Excel, matching rates, converting currencies, and building pivot tables. Every single week.

The solution: an agent that syncs timesheets, matches each entry to the correct billing rate, respects which hours are billable, converts currencies using live exchange rates, and generates a report automatically.
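The core of that calculation can be sketched in a few lines. This is a simplified illustration, assuming each time entry already carries its hourly rate and currency; the `TimeEntry` fields and the exchange rates in the usage example are made up, not taken from our accounting system.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class TimeEntry:
    hours: Decimal
    hourly_rate: Decimal  # in the entry's own currency
    currency: str         # "NOK", "EUR", or "GBP"
    billable: bool


def revenue_in_nok(entries: list[TimeEntry], rates_to_nok: dict[str, Decimal]) -> Decimal:
    """Sum billable revenue, converting every entry to NOK."""
    total = Decimal("0")
    for entry in entries:
        if not entry.billable:
            continue  # internal or non-billable hours earn nothing
        total += entry.hours * entry.hourly_rate * rates_to_nok[entry.currency]
    return total.quantize(Decimal("0.01"))
```

A quick usage example with invented numbers:

```python
entries = [
    TimeEntry(Decimal("10"), Decimal("1000"), "NOK", True),
    TimeEntry(Decimal("2"), Decimal("100"), "EUR", True),
    TimeEntry(Decimal("5"), Decimal("1000"), "NOK", False),
]
rates = {"NOK": Decimal("1"), "EUR": Decimal("11.5")}  # illustrative rate only
print(revenue_in_nok(entries, rates))  # 12300.00
```

Using `Decimal` rather than floats keeps the totals comparable against an accounting export down to the last øre.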

The first version got the revenue calculation wrong by 40%. It turned out our accounting system has three different pricing models, and some projects store rates per employee rather than per activity. After an hour of debugging, comparing output against a 255-line CSV export line by line, we reached an exact match. The only remaining difference was a 383 NOK variance from using today’s exchange rate rather than the rate from that specific week.

That accuracy did not come easy. But now it runs automatically, every week, at essentially no cost.

Teaching Agents How to Think

One of the more useful patterns in the system is skills: markdown documents injected into an agent’s context based on what it needs to know. Not code. Just knowledge.

We have skills for coding standards, security review checklists, data visualisation formats, and Norwegian compliance conventions. There is also one called token-efficiency that instructs the agent to be concise: get to the point, no repetition, fewest tool calls possible. It has a measurable impact on cost. An agent without it might use 500 tokens to say what a well-prompted agent says in 150.

Each agent gets exactly the knowledge it needs. Nothing more.
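Mechanically, skill injection can be as simple as concatenating markdown files into the system prompt. The sketch below assumes one `.md` file per skill in a single directory; the function name, the file layout, and the `## Skill:` heading are hypothetical.

```python
from pathlib import Path


def build_system_prompt(base_prompt: str, skill_names: list[str], skills_dir: Path) -> str:
    """Append only the requested skill documents to an agent's base prompt."""
    sections = [base_prompt.strip()]
    for name in skill_names:
        # Assumed layout: skills/<name>.md, e.g. skills/token-efficiency.md
        text = (skills_dir / f"{name}.md").read_text(encoding="utf-8").strip()
        sections.append(f"## Skill: {name}\n{text}")
    return "\n\n".join(sections)
```

Since each agent lists its own skills, a finance agent never pays tokens for a security checklist it will not use.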

The Numbers

DevOps status checks: $0.00 (self-hosted, so the only cost is electricity)

Average daily cost: ~$3–5 on active development days

Cost per finance sync: ~$0.02

Cost per revenue report: ~$0.01

A budget cap enforces a daily spend limit in real time. If we hit it, all external API calls stop. No surprise bills, no runaway loops. That one line in every server log, “Budget today: $3.64 / $10”, is the canary in the coal mine.
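A daily cap like this needs very little code. Here is a minimal in-memory sketch; the class and method names are hypothetical, and a real version would persist spend across restarts and compute cost from actual token usage.

```python
from datetime import date


class BudgetExceeded(RuntimeError):
    """Raised when the daily spend limit has been reached."""


class DailyBudget:
    def __init__(self, limit_usd: float):
        self.limit_usd = limit_usd
        self.day = date.today()
        self.spent_usd = 0.0

    def _roll_over(self) -> None:
        # Reset the counter when the calendar day changes.
        today = date.today()
        if today != self.day:
            self.day, self.spent_usd = today, 0.0

    def check(self) -> None:
        """Call before every external API request."""
        self._roll_over()
        if self.spent_usd >= self.limit_usd:
            raise BudgetExceeded(self.status_line())

    def record(self, cost_usd: float) -> None:
        """Call after every external API response with its cost."""
        self._roll_over()
        self.spent_usd += cost_usd

    def status_line(self) -> str:
        return f"Budget today: ${self.spent_usd:.2f} / ${self.limit_usd:g}"
```

Calling `check()` before each request, rather than after, is what stops a looping agent: the call that would exceed the cap never leaves the machine.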

What Genuinely Surprised Me

Here’s the part that’s both exciting and slightly uncomfortable.

I spent a day building the finance agent, pair-programming with an AI tool that was itself using the Deep Thought interface to test changes. At one point I asked it to debug why our revenue was off by 145,000 NOK. It wrote a comparison script, parsed the 255-line CSV, identified three distinct categories of errors, and proposed fixes for each. The entire session, from “the number is wrong” to “exact match confirmed”, took about 30 minutes.

That same analysis would have taken me a full day manually. And I probably would not have found the employee-specific rate edge case, because I did not know it existed.

Beyond executing the tasks I assigned, the AI found problems I had not thought to look for.

What I’d Do Differently

Start with the security model. I got lucky designing it early. Bolting it on later would have been harder, and considerably riskier.

Check what the API gives you. Our first approach tried to reconstruct billing rates from rate tables. It was complex, fragile, and wrong 15% of the time. When we found that the accounting API returns the hourly rate directly on each time entry, everything simplified dramatically.

Build in a daily budget cap. Without one, a looping agent could burn through serious money in an afternoon.

Where It’s Going

Deep Thought is an internal proof of concept. It runs. It is used. And it has changed how parts of Fortytwo operate week to week.

The broader vision is straightforward: AI agents handle the repetitive, data-heavy work (syncing timesheets, generating reports, monitoring infrastructure) while people focus on the decisions that require judgment, creativity, and accountability.

There is more to build. But after watching an AI agent debug its own revenue calculations by cross-referencing a CSV against a live API, I am convinced we are closer than most people think.

Oh, and everything is also available from the CLI.